While quantum computing has grown rapidly over the last decade, the concepts underneath the technology are not new. Quantum mechanics, the branch of modern physics that describes the behavior of the smallest particles in the universe, began its journey at the turn of the 20th century and has continued to evolve ever since.

Many early discoveries in this then-new field were controversial. Einstein himself was deeply uncomfortable with entanglement, which he famously dismissed as “spooky action at a distance.” Over time, however, the principles of quantum mechanics have been repeatedly validated through experiments and have become the foundation for many modern technologies, including quantum computing.


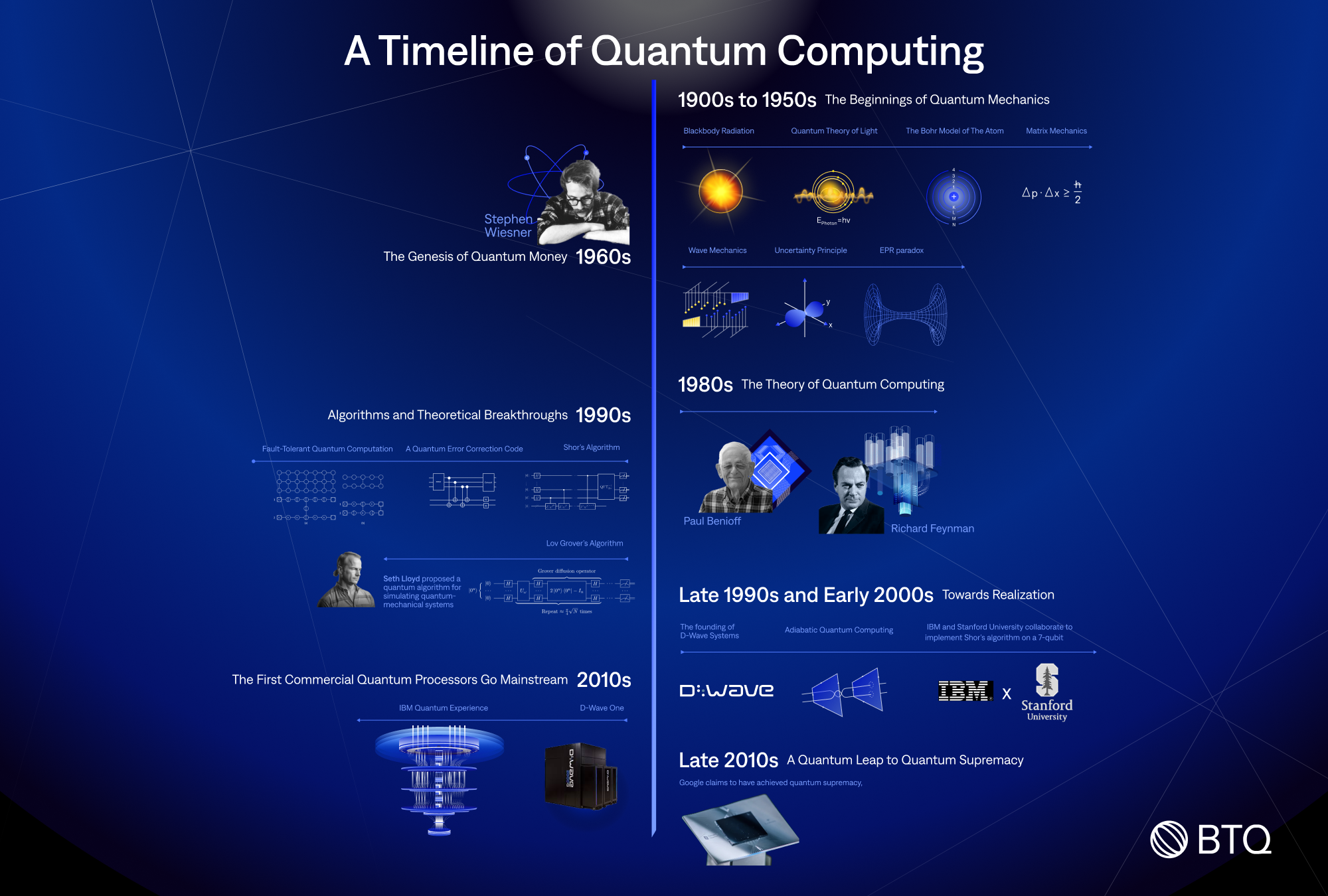

The 1900s to 1950s: The Beginnings of Quantum Mechanics

Early quantum theory, developed by physicists such as Max Planck, Albert Einstein, Niels Bohr, Werner Heisenberg, and Erwin Schrödinger, laid the groundwork for quantum mechanics.

1900: Max Planck introduced the concept of quantized energy to explain the emission spectrum of black bodies, marking the birth of quantum physics.

1905: Albert Einstein proposed the photon theory of light, suggesting that light behaves as both a wave and a particle, and explained the photoelectric effect using the concept of light quanta.

1913: Niels Bohr introduced the Bohr model of the atom, which postulated that electrons orbit the nucleus in discrete energy levels, with transitions between levels resulting in the emission or absorption of photons.

1925: Werner Heisenberg developed matrix mechanics, the first formulation of quantum mechanics, which described the behavior of quantum systems using matrices and operators.

1926: Erwin Schrödinger developed wave mechanics, an alternative formulation of quantum mechanics that described quantum systems using wave functions. Schrödinger's equation became a fundamental tool for describing the behavior of quantum particles.

1927: Heisenberg introduced the uncertainty principle, which states that the position and momentum of a quantum particle cannot be simultaneously measured with arbitrary precision.

1935: Einstein, Boris Podolsky, and Nathan Rosen proposed the EPR paradox, a thought experiment that highlighted the seemingly paradoxical nature of quantum entanglement.

The 1960s: The Genesis of Quantum Money

1969: Physicist Stephen Wiesner was the first person to propose the idea of “quantum money.” Quantum money is a currency whose authenticity is backed by the laws of quantum mechanics. In other words, quantum money can be thought of as a currency issued with qubits stored in complementary bases and made counterfeit-proof by virtue of the no-cloning theorem.

The idea was for each bill to store a few dozen polarised photons and ensure that only the bank knows the polarisation of these photons. Since it’s impossible to copy quantum states, anyone who wants to check the authenticity of a bill will have to take it to the issuing bank, which can confirm its authenticity using prior knowledge of the bill’s polarisation.
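The verification scheme described above can be sketched as a toy simulation. This is a classical stand-in under stated assumptions, not Wiesner's original construction: each photon is modelled as a `(basis, bit)` pair, measuring in the wrong basis is modelled as a coin flip, and the function names (`mint_bill`, `counterfeit`, `bank_verifies`) are hypothetical. It illustrates why a counterfeiter who has to guess the bases is caught with high probability.

```python
import random

def mint_bill(n_photons, rng):
    """Bank picks a secret basis (0 or 1) and bit (0 or 1) for every photon."""
    return [(rng.randint(0, 1), rng.randint(0, 1)) for _ in range(n_photons)]

def measure(photon, basis, rng):
    """Measuring in the preparation basis returns the stored bit;
    measuring in the other basis gives a random bit (toy model)."""
    prep_basis, bit = photon
    return bit if basis == prep_basis else rng.randint(0, 1)

def bank_verifies(bill, secret, rng):
    """The bank measures each photon in its secret basis and checks the bit."""
    return all(measure(photon, basis, rng) == bit
               for photon, (basis, bit) in zip(bill, secret))

def counterfeit(bill, rng):
    """A forger without the secret guesses a basis per photon, measures,
    and re-prepares a new photon from whatever was observed."""
    forged = []
    for photon in bill:
        guess = rng.randint(0, 1)
        forged.append((guess, measure(photon, guess, rng)))
    return forged

def forgery_acceptance_rate(n_photons, trials, seed=0):
    """Fraction of forged bills that the bank accepts as genuine."""
    rng = random.Random(seed)
    accepted = 0
    for _ in range(trials):
        secret = mint_bill(n_photons, rng)   # the genuine bill carries exactly these states
        forged = counterfeit(secret, rng)
        accepted += bank_verifies(forged, secret, rng)
    return accepted / trials
```

In this model each forged photon passes with probability 3/4, so a 20-photon bill survives verification with probability (3/4)^20, or roughly 0.3%. Adding more photons per bill drives that probability down exponentially.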

The 1980s: The Theory of Quantum Computing

The concept of quantum computing emerged in the early 1980s with two key proposals:

1980: Paul Benioff, a physicist at Argonne National Laboratory, proposed the idea of a quantum mechanical model of a Turing machine, which could perform computations using quantum mechanical principles. This idea laid the theoretical groundwork for quantum computing.

1982: Richard Feynman, a renowned physicist at Caltech, independently proposed the idea of a quantum computer in a paper based on his 1981 talk at the First Conference on the Physics of Computation at MIT. Feynman suggested that a computer based on quantum principles could efficiently simulate quantum systems, a task that is exponentially difficult for classical computers.

The 1990s: Algorithms and Theoretical Breakthroughs

1994: A groundbreaking year, as mathematician Peter Shor presents an algorithm capable of factoring large numbers, a problem whose difficulty is the foundation of our modern encryption system. Known as Shor’s algorithm, it shows in theory that a quantum computer could efficiently solve the mathematical problems that underlie our current encryption standards (by one estimate, in about 100 seconds, compared with roughly 1 billion years on classical computers) and breach those standards in the process. Run on a quantum computer with a sufficiently large number of qubits, Shor’s algorithm can break the security that RSA and ECC offer, rendering both useless.

1995: Peter Shor discovered the first quantum error correction code, which uses a series of controlled operations to encode one logical qubit across nine physical qubits in a specific pattern. “Error correction is the detection of errors and reconstruction of the original, error-free data.”[*] Because quantum states are fragile and decohere quickly, error correction is even more vital in quantum computing than in classical computing.

Shor’s error correction code uses this pattern to detect whether a bit flip or phase flip error has occurred on a particular qubit and to correct it without measuring the logical qubit directly. The code performs its error correction by measuring error syndromes with the help of ancilla (helper) qubits. By analyzing these syndromes, the code can ascertain the error type and its location and apply targeted corrective measures, while the quantum information in the logical qubit is preserved throughout.
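The idea of locating an error from syndromes alone can be illustrated with a much smaller code. The sketch below is a classical analogue of the three-qubit bit-flip code, which is one building block of Shor’s nine-qubit code; it does not model phase flips or superpositions, and the function names are hypothetical. The point it makes is that the two parity checks identify which position flipped without revealing the encoded value.

```python
def encode(bit):
    """Repeat the logical bit three times."""
    return [bit, bit, bit]

def syndrome(block):
    """Two parity checks: positions (0,1) and (1,2).
    They compare neighbours and never read the logical value itself."""
    return (block[0] ^ block[1], block[1] ^ block[2])

# Each single-flip error produces a distinct syndrome.
SYNDROME_TO_POSITION = {
    (0, 0): None,  # no error detected
    (1, 0): 0,     # first position flipped
    (1, 1): 1,     # middle position flipped
    (0, 1): 2,     # last position flipped
}

def correct(block):
    """Flip back the position indicated by the syndrome, if any."""
    position = SYNDROME_TO_POSITION[syndrome(block)]
    fixed = list(block)
    if position is not None:
        fixed[position] ^= 1
    return fixed
```

Note that `syndrome([1, 0, 0])` and `syndrome([0, 1, 1])` are identical: the syndrome depends only on where the error sits, not on whether the encoded bit is 0 or 1. That property is what lets the quantum version correct errors without collapsing the logical state.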

1996: Dorit Aharonov and Michael Ben-Or (and independently Manny Knill, Raymond Laflamme, and Wojciech Zurek) proposed a way to perform fault-tolerant quantum computation, which allows for arbitrarily long quantum computations when the physical error rate is below a threshold value. This proposal improved on Shor’s method of performing fault-tolerant quantum computation.

1996: Lov Grover introduced an algorithm designed to significantly improve the efficiency of searching through unsorted or unstructured databases. Later named after its creator, Grover’s algorithm offers a quadratic speedup over its classical counterpart: it finds a marked item among N entries in roughly √N steps, rather than the roughly N steps a classical search needs.
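To make the search speedup concrete, here is a minimal sketch that simulates Grover’s algorithm classically by tracking the amplitude of every item. It is an illustration only: a real quantum computer never stores this list explicitly, and the function name `grover_search` is hypothetical.

```python
import math

def grover_search(n_items, marked):
    """Simulate Grover's algorithm on n_items entries with one marked index.
    Returns the number of iterations used and the final probabilities."""
    # Start in the uniform superposition over all items.
    amplitudes = [1 / math.sqrt(n_items)] * n_items
    # About (pi/4) * sqrt(N) iterations maximise the success probability.
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amplitudes[marked] = -amplitudes[marked]
        # Diffusion: reflect every amplitude about the mean.
        mean = sum(amplitudes) / n_items
        amplitudes = [2 * mean - a for a in amplitudes]
    probabilities = [a * a for a in amplitudes]
    return iterations, probabilities
```

For 1,024 items, `grover_search(1024, marked=123)` uses 25 iterations and leaves the marked item with a probability above 0.99, whereas a classical search would expect to inspect about 512 entries.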

1996: Seth Lloyd proposed a quantum algorithm for simulating quantum-mechanical systems, expanding the potential applications of quantum computing. In doing so, he proved Feynman's 1982 conjecture, that quantum computers can be programmed to simulate generic quantum systems, to be correct.

The Late 1990s and Early 2000s: Towards Realization

1999: The founding of D-Wave Systems by Geordie Rose marks a pivotal step towards commercial quantum computing. The company is headquartered in Burnaby, British Columbia, Canada, and has been working on developing and commercializing quantum computing technology. D-Wave Systems is widely recognized as the world's first company to offer commercial quantum computers. They developed a type of quantum computer known as a quantum annealer, which is designed to solve optimization problems.

2000: Eddie Farhi at MIT introduced the concept of adiabatic quantum computing, further diversifying the approaches to quantum computation. The gate-based (or digital) model, often described as the “universal” approach, works on a principle similar to that of a classical computer built from logic gates. Adiabatic quantum computing, by contrast, relies on the adiabatic theorem to carry out computations.

2001: IBM and Stanford University researchers collaborate to successfully implement Shor’s algorithm on a 7-qubit processor. The researchers employed an early kind of quantum computer called a liquid-state nuclear magnetic resonance (NMR) quantum computer, whose 7-qubit processor was made from a billion billion (10^18) custom-designed molecules, to successfully factor the number 15.
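Factoring 15 is a natural first test because Shor’s algorithm reduces factoring to finding the period (order) of a^r mod N. The sketch below shows that reduction classically. It is not a record of what the IBM and Stanford experiment ran: `find_order` brute-forces the period that a quantum computer would find efficiently, and the function names and the choice of base a = 7 are assumptions made for illustration.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1. Requires gcd(a, n) == 1.
    Brute force here; this is the step a quantum computer speeds up."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a, n):
    """Try to split n using the order of a modulo n.
    Returns a pair of factors, or None if this base does not work."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared       # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                      # odd order: pick another base
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                      # trivial square root: pick another base
    p = gcd(half - 1, n)
    return p, n // p
```

With a = 7 the powers of 7 modulo 15 cycle through 7, 4, 13, 1, so the order is 4, and `factor_from_order(7, 15)` returns `(3, 5)`. Some bases fail (for example a = 14), in which case the procedure is simply retried with a different base.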

The 2010s: The First Commercial Quantum Processors Go Mainstream

2011: D-Wave Systems announced the release of D-Wave One, the first commercial quantum annealer, signifying a major leap from theory to practice. D-Wave One operates on a superconducting 128-qubit processor using quantum annealing, which solves certain optimization problems by encoding each problem so that the processor's minimum-energy state corresponds to the solution.
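The phrase "minimum-energy state corresponds to the solution" can be illustrated with a small example. The sketch below encodes a number-partitioning problem as an energy function over spins (+1 or -1) and finds the lowest-energy configuration by brute force. It is only an illustration of the encoding step: it performs no annealing, it is not D-Wave's API, and the function names and example weights are hypothetical.

```python
from itertools import product

def ising_energy(spins, weights):
    """Energy is the squared difference between the two groups' totals.
    A spin of +1 puts the weight in one group, -1 in the other."""
    return sum(s * w for s, w in zip(spins, weights)) ** 2

def ground_state(weights):
    """Brute-force the spin configuration with the lowest energy.
    An annealer searches for this state physically instead."""
    return min(product((-1, 1), repeat=len(weights)),
               key=lambda spins: ising_energy(spins, weights))

weights = [4, 5, 6, 7, 8]
best = ground_state(weights)
group_a = [w for s, w in zip(best, weights) if s == 1]
group_b = [w for s, w in zip(best, weights) if s == -1]
```

For these weights the lowest energy is 0, which means the two groups have equal totals (15 each). Brute force checks all 2^n configurations, which is exactly what becomes infeasible as problems grow and what annealing hardware aims to sidestep.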

2016: IBM made a historic move by putting quantum computing on the IBM Cloud, opening up new possibilities for research and development. The platform, called IBM Quantum Experience, allows users to run algorithms and experiments on IBM's 5-qubit quantum processor, work with individual qubits, and explore tutorials and simulations around the possibilities that quantum computing presents.

The Late 2010s: A Quantum Leap to Quantum Supremacy

2019: Google claims to have achieved quantum supremacy, a term coined by John Preskill in 2012 to describe the point at which quantum computers can perform tasks that surpass the capabilities of classical computers.

Google's Sycamore, a 53-qubit quantum computer, performed a quantum random circuit sampling task. In this task, a quantum computer generates a probability distribution by applying a sequence of random quantum gate operations to a set of qubits and then measuring the resulting state. The circuit included 1,113 single-qubit gates and 430 two-qubit gates, with a depth (number of gate cycles) of 20. The goal is to produce a set of bitstrings (sequences of 0s and 1s) with probabilities that closely match the theoretical predictions.

Google claimed that sampling the output of this quantum circuit one million times would take 10,000 years on a state-of-the-art classical supercomputer, while Sycamore, thanks to its quantum properties, was able to perform the sampling task in just 200 seconds.

However, some experts in the field have debated the specific details of the task and the claimed speedup over classical computers. IBM, in particular, argued that an optimized classical simulation could perform the same task in just 2.5 days, rather than the 10,000 years claimed by Google.

Despite these debates, Google's achievement represents a significant milestone in the development of quantum computing and has sparked further research and investment in the field.

Today: The Quantum Present

Today's quantum computers are often likened to the vacuum tube era of classical computing. We are still in the NISQ (Noisy Intermediate-Scale Quantum) era: NISQ computers are immensely difficult to stabilize and are too unreliable to execute major computing tasks of commercial importance.

Though it may take a couple of years before general-purpose quantum computers can be applied to solving diverse practical problems, the recent breakthroughs in neutral atom quantum computing, superconducting qubits, and photonics mean we are stepping closer to the era of quantum computers outstripping classical ones. Before this can happen, however, quantum computers will require thousands of qubits, which poses the challenge of scaling up. Nevertheless, we stand at the threshold of developing useful and scalable quantum computers, ready to unlock solutions to problems in pharmaceuticals, materials, fundamental physics, and even machine learning and optimization for industrial applications across the world.